writing

A Visual Exploration of Neural Radiance Fields

Apr 2023

Generating new views of a scene from limited input images, known as novel view synthesis, is a challenging problem in computer vision and graphics. Traditional methods are force to choose either flexibility or realism. Neural Radiance Fields (NeRF) is a new approach that learns the 3D structure and appearance of a scene directly from a sparse set of 2D images, enabling high-quality, photorealistic view synthesis. This post aims to provide a clear intuition of how NeRFs work and the new trade-offs involved in using this approach.

Volume Rendering

Before diving into the details of NeRF’s functionality, it is essential to establish a better understanding of 3D object representation. A “volume” is a dataset spanning three spatial dimensions, denoted as , and can include several “features” for each point in space, such as density or texture. Unlike meshes, which are defined solely by their surface structure, volumes are characterized by both their surface and interior structure. This property makes volumes a natural choice for representing semi-transparent objects like clouds, smoke, or liquids. For our purposes, let us define the “interesting” features for our volume as a color and a density .

Rendering a 2D image from a volume involves computing the “radiance” - the reflection, refraction, and emittance of light from an object to a particular observer - which can then be used to generate an pixel grid depicting the appearance of our model.

A common technique for volume rendering, and the one employed in NeRF, is Ray Marching. Ray Marching constructs a series of rays, defined as , with an origin vector and a direction vector for each pixel. We then sample along this ray to obtain both a color, , and a density, .

To form an RGBA sample, a transfer function (from classical volume rendering 1) is applied to these samples. This transfer function progressively accumulates from the point closest to the eye to the point where we exit our volume. We can conceptualize this process discretely as follows:

where , , and is the sampling distance.

The transfer function in volume rendering is designed to capture the intuitive concept that objects occluded by dense or opaque materials will contribute less to the final rendered image compared to objects obscured by less-dense or transparent materials. For example, consider a scene with a glass vase containing a bouquet of flowers. The flowers positioned behind the transparent glass will be more visible and contribute more to the rendered image than flowers positioned behind a dense, opaque ceramic vase.

The rendering component must be differentiable to facilitate efficient optimization through techniques such as gradient descent 2. This differentiability allows the rendering process to be fine-tuned based on the discrepancy between the rendered image and the target image.

However, the true innovation of NeRF lies not in the rendering approach itself, but rather in the method of capturing and encoding the volume. NeRF addresses two key challenges:

-

How can the volume be encoded to allow querying at any arbitrary point in space? This enables the rendering of the scene from novel viewpoints not present in the original dataset.

-

How can the encoding account for non-”matte” surfaces? Matte surfaces exhibit diffuse reflection, where incoming light is scattered uniformly in all directions. However, many real-world objects have non-matte surfaces, such as glossy or specular materials that exhibit more complex reflectance properties. For example, a NeRF model trained on images of a shiny metal sculpture should be able to capture the specular highlights and reflections present on the sculpture’s surface.

While the differentiable rendering component is essential for optimization, the true innovation of NeRF resides in its approach to encoding the volume, enabling query-ability at arbitrary points in space and accounting for non-matte surface properties.

Volume Representation

Let us first consider a naive approach for encoding volumes - voxel grids. Voxel grids apply an explicit discretisation to the 3D space, chunking up into cubes of space, and storing the required metadata in an array (often referencing a material that includes colour, and viewpoint-specific features which the ray marcher must take into account). The challenge with this internal representation is that it takes no advantage of any natural symmetries and the storage and rendering requirements explode in . We also need to handle the viewpoint-specific reflections and lighting separately in the rendering process which is a challenge in itself.

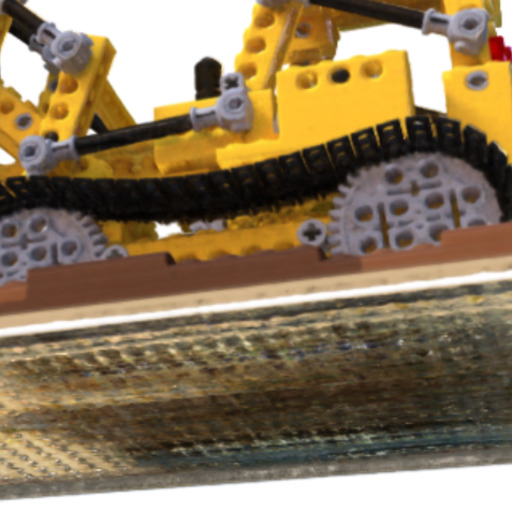

You can get a good feel for the performance impact by increasing the level of granularity in the Sine-wave Voxel Grid. I encourage you to compare this level of detail and fluidity to our NeRF Lego bulldozer.

Neural Radiance Fields (NeRF)

What is volume if not a function mapping? Given that there are clear symmetries in any particular volume, we’d like a method to learn to exploit those symmetries. Densely connected neural networks, which consist of multiple layers of interconnected nodes with nonlinear activation functions like Rectified Linear Units (ReLU) 3, are excellent approximators when given sufficient data.

Neural Radiance Fields (NeRF) 4 use densely connected ReLU Neural Networks (8 layers of 256 units) to represent this volume. The resulting Neural Network, , can output an value for each spatial/viewpoint pair. Implicitly this encodes all of the material properties that normally have to be manually specified, including lighting, reflectance, and transparency. This allows NeRF to learn a continuous, high-fidelity representation of a scene from a set of input images. Put more formally we can see this as:

Optimizing

To optimize the NeRF model, a volume renderer is used to generate a 2D image from the learned 3D representation. This is done by ray marching through the volume and accumulating the color and density values along each ray. The resulting 2D image is then compared to the ground truth image, and the difference is used to compute a pixel-squared error loss. By minimizing this loss over the model parameters using gradient descent, the NeRF model learns to accurately represent the 3D scene.

NeRF has been successfully applied to a variety of tasks, including novel view synthesis 4, dynamic scene representation 5, and large-scale scene reconstruction 6. Its ability to learn high-fidelity, continuous representations of scenes from a set of input images makes it a powerful tool for many computer vision and graphics applications.

Challenges

Data Requirements and Training Times

One of the significant challenges associated with NeRFs is the substantial amount of data required to train a model effectively. There is no prior knowledge in basic NeRF models. Instead, each model is trained from scratch for each scene we want to synthesise.

The data requirements can vary depending on factors such as scene complexity and the desired quality of the rendered output. For example, a simple object with a uniform texture may require fewer training images compared to a complex outdoor scene with intricate details and varying lighting conditions.

Real-world case studies have shown that training a high-quality NeRF model can take several hours or even days, depending on the size of the dataset and the available computational resources. For instance, the original NeRF paper 4 reported training times of around 1-2 days for scenes with 100-400 images using a single GPU. These extended training times can be a limiting factor for practical applications, especially when dealing with large-scale or dynamic scenes.

We can see the impact that has on both training time, and the data requirements in our training simulator.

Special Sauce

You may notice that the above examples don’t have the same clarity as published NeRFs. This is no accident, beyond just training time, state-of-the-art performance when optimising these densely connected networks requires a few additional techniques.

Held-out Viewing Directions

Rather than adding viewing direction to the original input to the function, it is best to leave the directions out of the first 4 layers. This reduces the number of view-dependent (often floating) artifacts that occur from a premature optimisation of a view-dependent feature before realising the underlying spatial structure.

Fourier Transforms

In the original NeRF implementation, the input to the neural network is not the raw RGB images and viewpoints, but rather a transformed representation using Fourier features. Fourier transforms are a mathematical tool that decompose a signal into its constituent frequencies. In the context of NeRFs, applying Fourier features to the input coordinates allows the network to capture high-frequency details that are essential for representing fine structures and textures.

The precise mechanism to utilise Fourier features is to apply a pre-processing step to transform the position into sinusoidal signals of exponential frequencies.

The network architecture for NeRF (Densely connected ReLU) is incapable of modeling signals with fine detail and fails to represent a signal’s spatial and temporal derivatives, which are essential to the physical signals. This is a similar realisation to the work of Sitzmann 7, although approached by pre-processing as opposed to changing the network’s activation function.

To visualize the effect of Fourier features, imagine a simple 1D signal with sharp edges and fine details. A standard ReLU MLP would struggle to accurately represent this signal, resulting in a smoothed or blurred output. However, by transforming the input coordinates using Fourier features, the network can effectively capture the high-frequency components of the signal, enabling it to represent sharp edges and fine details more accurately.

This seemingly arbitrary trick leads to substantially better results and removes the tendency to “blur” resulting images, allowing the network to accurately capture and represent the essential spatial and temporal derivatives of the physical signals.

The stationary property of the dot product of Fourier Features (of which Positional Encoding is one type) is essential for the ReLU MLP to learn and represent high-frequency components effectively. Stationarity means that the statistical properties of the dot product remain constant across different positions in the input space. In other words, the similarity between two points in the transformed space depends only on their relative positions, not on their absolute locations.

This stationarity property allows the ReLU MLP to learn a set of weights that can accurately capture the high-frequency components of the signal, regardless of their position in the input space. The learned weights of the ReLU MLP correspond to a set of periodic functions that can effectively represent the high-frequency components of the transformed signal.

Hierarchical Sampling

One of the first questions that likely came to mind when talking about classical volume rendering was why we were using a uniform sampling system when the vast majority of models will have large quantities of empty space (with low densities) and diminishing value from samples behind dense objects.

The original NeRF 4 paper handles this by learning two models simultaneously - a coarse-grained model that provides density estimates from particular position/viewpoint pairs and a fine-grained model identical to the original model. The coarse-grained model is then used to re-weight the sampling of the fine-grained model and generally produces better results.

Conclusion

With this article, you should have obtained an overview of the original Neural Radiance Field paper, and developed a deeper understanding of how they work. As we have seen, Neural Radiance Fields offer a flexible and powerful framework for encoding and rendering 3D scenes. While the original NeRF approach has some limitations, such as substantial data requirements and extended training times, ongoing research has led to various extensions and improvements that address these challenges and expand the capabilities of NeRFs. As the field continues to evolve, we can expect to see further advancements that make NeRFs more practical and accessible for a wider range of applications.

Footnotes

-

Volume rendering

Drebin, R.A., Carpenter, L. and Hanrahan, P., 1988. ACM Siggraph Computer Graphics, Vol 22(4), pp. 65—74. ACM New York, NY, USA. ↩ -

For the full details of how Ray Marching works, I’d recommend 1000 Forms of Bunny’s guide, and you can also see the code used for the TensorFlow renderings which uses a camera projection for acquiring rays and a 64 point linear sample. ↩

-

Rectified linear units improve restricted boltzmann machines

Nair, V., & Hinton, G. E. (2010). In Proceedings of the 27th international conference on machine learning (ICML-10) (pp. 807-814). ↩ -

Nerf: Representing scenes as neural radiance fields for view synthesis

Mildenhall, B., Srinivasan, P.P., Tancik, M., Barron, J.T., Ramamoorthi, R. and Ng, R., 2020. European conference on computer vision, pp. 405—421. ↩ ↩2 ↩3 ↩4 -

D-nerf: Neural radiance fields for dynamic scenes

Pumarola, A., Corona, E., Pons-Moll, G. and Moreno-Noguer, F., 2021. Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pp. 10318—10327. ↩ -

Nerf in the wild: Neural radiance fields for unconstrained photo collections

Martin-Brualla, R., Radwan, N., Sajjadi, M.S., Barron, J.T., Dosovitskiy, A. and Duckworth, D., 2021. Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pp. 7210—7219. ↩ -

Implicit neural representations with periodic activation functions

Sitzmann, V., Martel, J., Bergman, A., Lindell, D. and Wetzstein, G., 2020. Advances in Neural Information Processing Systems, Vol 33. ↩